Introduction

Over the past few years, organizations across every industry have aggressively adopted artificial intelligence (AI). From customer service automation and fraud detection to hiring, diagnostics, and predictive analytics, AI is now deeply embedded in core business operations. Budgets are expanding, tools are evolving rapidly, and innovation cycles are accelerating at an unprecedented pace.

Yet despite this surge in adoption, a significant number of AI initiatives fail to scale, stall after pilot phases, or introduce risks that leadership did not anticipate.

Why does this happen?

The common assumption is that AI transformation is primarily a technology challenge. Organizations invest heavily in infrastructure, hire data scientists, and deploy increasingly advanced models. But in reality, the biggest obstacle is not technical capability.

AI transformation is fundamentally a problem of governance.

The real challenges emerge around:

- Accountability

- Risk ownership

- Ethical boundaries

- Regulatory compliance

- Decision authority

As AI systems begin influencing critical decisions—such as loan approvals, hiring, pricing, or medical diagnoses—the issue shifts from performance to responsibility.

- Who is accountable when AI makes a mistake?

- Who controls how AI is used?

- Who ensures it remains compliant and ethical?

Without governance, AI becomes an uncontrolled force within organizations.

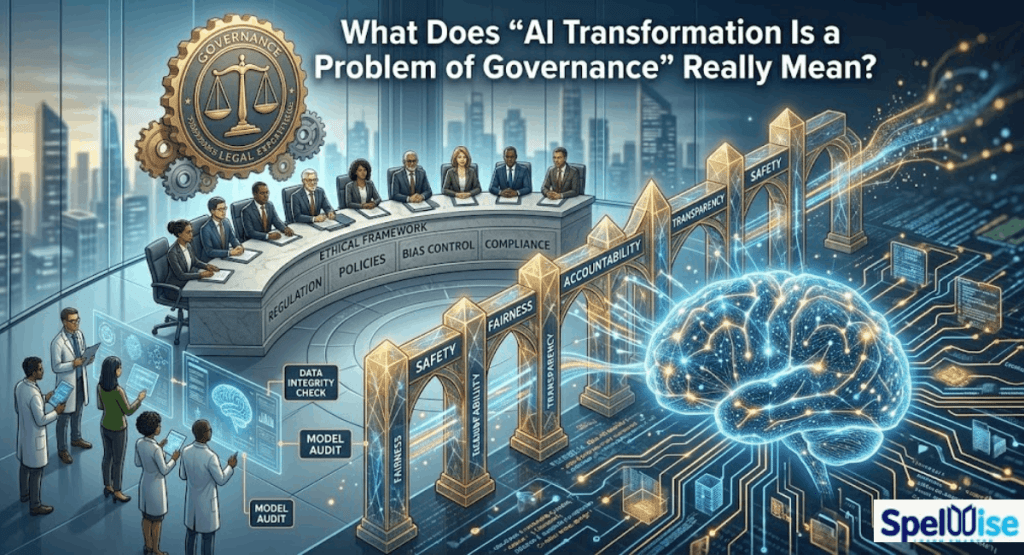

What Does “AI Transformation Is a Problem of Governance” Really Mean?

AI transformation becomes a governance problem when the core challenge shifts from simply building AI systems to effectively controlling, managing, and scaling how those systems are used across the organization.

At a small scale, teams can experiment freely with minimal oversight. Innovation moves quickly, risks are limited, and informal decision-making structures often work well enough.

However, as AI expands across departments, geographies, and critical business workflows, those informal controls begin to break down. What once worked in isolated experiments becomes insufficient in enterprise-wide deployment.

At this stage, governance is no longer optional—it becomes essential. Organizations must establish clear rules, accountability structures, and oversight mechanisms to ensure AI systems are used responsibly, consistently, and in alignment with business goals and regulatory requirements.

Governance vs Management vs Technology

To understand this clearly:

- Technology builds AI systems

- Management operates them

- Governance defines authority, accountability, and oversight

Governance answers critical questions:

- Who approves AI use in high-risk scenarios?

- Who sets acceptable error thresholds?

- Who monitors model performance?

- Who is responsible for failures?

Without clear answers, organizations face confusion, delays, and risk exposure.

Decision Rights in the AI Era

AI fundamentally changes how decisions are made.

Traditionally:

- Humans made decisions

- Reporting lines were clear

Now:

- AI models influence or automate decisions

Examples include:

- Fraud detection systems flag transactions

- Recruitment algorithms rank candidates

- Pricing models dynamically adjust prices

When errors occur, accountability becomes blurred across:

- Data teams

- Product managers

- Legal departments

- Business leaders

Governance defines decision rights before crises occur.

Who Owns AI Risk?

AI introduces multiple layers of risk:

- Legal liability

- Regulatory violations

- Bias and discrimination

- Reputational damage

- Financial loss

- Operational instability

In many organizations, responsibility is unclear:

- IT assumes legal handles compliance

- Legal assumes product owns deployment

- Product assumes data science owns models

Governance eliminates this confusion by assigning clear ownership and escalation paths.
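As a rough illustration, an ownership register can be kept as simple structured data so that unowned risks become visible. The sketch below is hypothetical: the role names, the risk keys, and the helper functions `find_unowned` and `escalation_path` are assumptions made for the example, not a prescribed structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskOwnership:
    """One accountable owner per risk, plus an ordered escalation chain."""
    owner: str
    escalation: tuple[str, ...]


# Hypothetical assignments; real roles depend on the organization.
OWNERSHIP = {
    "legal_liability": RiskOwnership("General Counsel", ("CEO",)),
    "regulatory_violation": RiskOwnership(
        "Chief Compliance Officer", ("General Counsel", "Board Risk Committee")
    ),
    "bias_and_discrimination": RiskOwnership(
        "Head of Data Science", ("Chief Risk Officer", "Board Risk Committee")
    ),
    "reputational_damage": RiskOwnership("Chief Communications Officer", ("CEO",)),
}


def find_unowned(risks, registry):
    """Return the risks that nobody has been assigned to, sorted by name."""
    return sorted(r for r in risks if r not in registry)


def escalation_path(risk, registry):
    """Return the owner followed by the roles to escalate to, in order."""
    entry = registry[risk]
    return [entry.owner, *entry.escalation]


ALL_RISKS = [
    "legal_liability",
    "regulatory_violation",
    "bias_and_discrimination",
    "reputational_damage",
    "financial_loss",
    "operational_instability",
]

if __name__ == "__main__":
    print("Unowned risks:", find_unowned(ALL_RISKS, OWNERSHIP))
    print("Escalation:", escalation_path("regulatory_violation", OWNERSHIP))
```

Running a check like `find_unowned` as part of a regular review is one way to catch gaps before an incident forces the question.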

Why AI Changes the Power Structure of Organizations

AI shifts influence within organizations:

- Data teams gain strategic importance

- Algorithms influence executive decisions

- Predictive insights shape investments

This creates a new power structure.

Without governance:

- The authority of AI systems and the teams behind them increases

- Accountability for the decisions they drive decreases

This imbalance is dangerous. Governance restores equilibrium.

Why AI Transformation Has Become a Governance Crisis in 2026

1. Scale and Autonomy of AI Systems

AI systems now operate at massive scale. A single flawed model can impact millions of decisions instantly.

2. Regulatory Pressure

Regulations in a growing number of jurisdictions require:

- Risk classification

- Documentation

- Transparency

- Monitoring

Non-compliance can lead to severe penalties.

3. Shadow AI Proliferation

Employees increasingly use external AI tools without approval.

Risks include:

- Data leakage

- Compliance violations

- Loss of control

4. Data Fragmentation

Many organizations operate with siloed data systems, leading to:

- Inconsistent outputs

- Poor model performance

- Increased risk

5. Misaligned Executive Incentives

- Innovation teams prioritize speed

- Compliance teams prioritize control

This creates internal conflict that only governance can resolve.

When AI Becomes Transformation (Not Experimentation)

During experimentation:

- Risk is low

- Data is controlled

- Impact is limited

During transformation:

- AI is embedded in core operations

- Real data is used

- Decisions have real consequences

This shift requires:

- Strong governance

- Defined ownership

- Continuous monitoring

Why Most Transformations Fail: The Governance Model Problem

A critical but often overlooked issue is that many organizations govern transformation incorrectly.

They treat transformation like a project.

This creates a fundamental mismatch.

When transformation is governed as a project:

- It appears structured

- It looks successful

- But it fails to deliver real change

Think of it like running an airline by optimizing individual flights. Every flight may depart on time, every crew may meet targets, and every schedule may be followed—but the airline can still fail as a business.

That is exactly what happens in many AI transformations.

Project Governance vs Transformation Governance

Scope

- Project: Fixed, controlled, predefined

- Transformation: Evolving, strategy-driven

Authority

- Project: Execution-focused, passive tracking

- Transformation: Active decision-making, resource control

Success

- Project: On time, on budget, within scope

- Transformation: New capabilities and outcomes

End Point

- Project: Defined finish line

- Transformation: Continuous evolution

Ownership

- Project: Intermittent sponsorship

- Transformation: Constant, active accountability

This difference is not small—it represents an entirely different operating model.

Organizations that fail to recognize this distinction end up with:

- Completed projects

- Green dashboards

- No real transformation

The issue is not poor governance—it is misapplied governance.

Core Components of AI Governance

AI governance is a system of controls and structures that define how AI operates within an organization.

Key Components

| Component | Purpose |
| --- | --- |
| Data Governance | Controls data usage and security |
| Access Control | Defines who can use AI |
| Model Governance | Evaluates AI systems |
| Usage Governance | Sets boundaries |
| Output Validation | Ensures accuracy |
| Monitoring | Tracks system behavior |
| Auditability | Maintains records |
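To make the auditability component more concrete, here is a minimal sketch of a tamper-evident audit log, where each entry stores a hash of its own content plus the previous entry's hash. The event fields (`model`, `decision`) and the function names are assumptions for illustration; a production system would typically rely on a dedicated logging or ledger service.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event, prev_hash):
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event to the log, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, {"model": "credit-scoring-v2", "decision": "declined"})
    append_entry(log, {"model": "credit-scoring-v2", "decision": "approved"})
    print("Log intact:", verify(log))
```

Because each hash depends on the one before it, editing an earlier record invalidates every later entry, which is what makes after-the-fact changes detectable.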

AI Governance vs Compliance vs Security

These are related but distinct:

- Governance → Defines internal control and decision-making

- Compliance → Ensures alignment with laws

- Security → Protects systems and data

Most failures occur when these areas are disconnected.

Common Breakdown Points in AI Governance

Governance failures typically start small and escalate:

- Policy vs reality gaps

- Lack of visibility

- Data exposure risks

- Unclear ownership

- Governance lagging behind adoption

These issues compound as AI scales.

AI Governance Across Regions: U.S. vs E.U.

European Union

- Risk-based regulation

- Strict compliance

- High transparency requirements

United States

- Flexible approach

- Sector-specific rules

- Focus on harm prevention

Global Challenge

Organizations must:

- Adapt to different regulations

- Maintain consistent governance

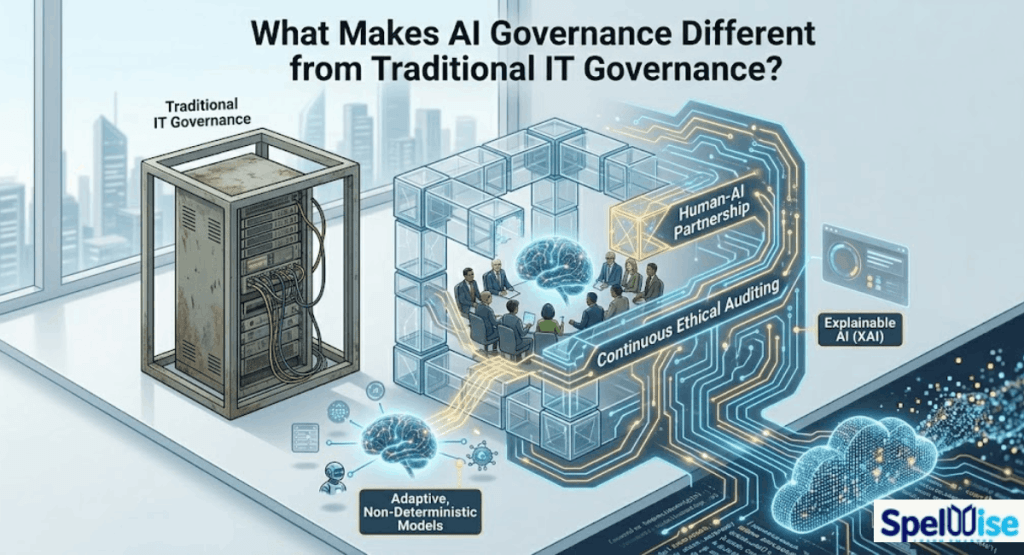

What Makes AI Governance Different from Traditional IT Governance?

AI systems are fundamentally different from traditional IT systems, and this difference is exactly why conventional governance models are no longer sufficient.

Traditional IT governance is built around predictable, rule-based systems. These systems behave consistently, follow predefined logic, and produce the same output for the same input. As a result, governance in traditional IT focuses on stability, security, and control through fixed policies and periodic audits.

AI systems, however, operate in a completely different way.

They are dynamic, data-driven, and often probabilistic. Instead of following fixed rules, AI models learn from data, adapt over time, and generate outputs that may vary depending on context, input quality, and evolving patterns. This introduces a level of complexity and uncertainty that traditional governance frameworks were never designed to handle.

Unlike static systems, AI models can drift, degrade, or change behavior as new data is introduced. This means governance cannot be a one-time activity—it must be continuous, adaptive, and deeply integrated into the entire lifecycle of the system.

Additionally, AI introduces entirely new categories of risk that go beyond technical performance. Issues such as bias, fairness, explainability, and ethical decision-making become central concerns. A system can be technically accurate yet still be unacceptable from a legal, ethical, or reputational standpoint.

Another major difference is the need for transparency. Traditional systems can often be audited through code and logs, but AI systems—especially complex models—can behave like “black boxes.” Governance must therefore ensure explainability and interpretability so that decisions can be understood and justified by stakeholders, regulators, and affected users.

Furthermore, AI governance requires real-time monitoring rather than periodic reviews. Because AI systems operate continuously and at scale, risks can emerge and propagate quickly. Organizations must implement ongoing validation, performance tracking, and risk assessment mechanisms to maintain control.
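One common way to quantify drift is the Population Stability Index (PSI), which compares the distribution of a model input or score in production against a baseline. The sketch below is a minimal, dependency-free illustration. The bin count, the small floor used to avoid taking the log of zero, and the 0.25 alert level (a widely used rule of thumb, not a standard) are all assumptions to tune for a given system.

```python
import math

ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb level for "significant" drift


def psi(baseline, current, bins=10):
    """Population Stability Index between a baseline and a current sample.

    Bins are equal-width over the baseline's range; current values outside
    that range are counted in the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), 1e-6) for c in counts]

    expected, actual = fractions(baseline), fractions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def drift_alert(baseline, current):
    """True when the PSI exceeds the assumed alert threshold."""
    return psi(baseline, current) > ALERT_THRESHOLD


if __name__ == "__main__":
    baseline_scores = [i / 100 for i in range(100)]
    shifted_scores = [s + 0.5 for s in baseline_scores]
    print("Same distribution alert:", drift_alert(baseline_scores, baseline_scores))
    print("Shifted distribution alert:", drift_alert(baseline_scores, shifted_scores))
```

A check like this can run on a schedule against recent production data, with alerts routed to whoever owns the model.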

In short, while traditional IT governance focuses on controlling systems, AI governance focuses on controlling outcomes, decisions, and impact. It requires a shift from static oversight to continuous accountability, from technical compliance to ethical responsibility, and from isolated control to enterprise-wide coordination.

1. Systems Learn and Evolve

Governance must adapt continuously.

2. Unpredictability

Outputs can vary in unexpected ways.

3. Ethical Risks

Bias and fairness become critical.

4. Continuous Monitoring

Static audits are no longer sufficient.

The Core Pillars of Effective AI Governance

1. Data Governance and Sovereignty

Ensures:

- Data quality

- Privacy compliance

- Secure access

2. Model Lifecycle Governance

Covers:

- Validation

- Testing

- Deployment

- Monitoring

- Retirement

3. Risk & Compliance Integration

AI risk must be embedded in enterprise risk strategy.

4. Human-in-the-Loop Oversight

Critical decisions require human intervention.
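In practice, human-in-the-loop oversight is often implemented as a routing rule in front of the model's output. The following is a hypothetical sketch: the set of high-risk use cases, the 0.9 confidence threshold, and the `route_decision` function are assumptions chosen for illustration.

```python
from enum import Enum


class Route(Enum):
    AUTO = "auto_approve"
    HUMAN = "human_review"


# Assumed list of use cases that always require a human decision.
HIGH_RISK_USE_CASES = {"loan_approval", "hiring", "medical_diagnosis"}


def route_decision(use_case, confidence, threshold=0.9):
    """Decide whether a model output can be applied automatically.

    High-risk use cases always go to a human; other use cases go to a human
    only when the model's confidence falls below the threshold.
    """
    if use_case in HIGH_RISK_USE_CASES:
        return Route.HUMAN
    if confidence < threshold:
        return Route.HUMAN
    return Route.AUTO


if __name__ == "__main__":
    print(route_decision("loan_approval", 0.99))
    print(route_decision("ticket_triage", 0.95))
    print(route_decision("ticket_triage", 0.60))
```

The key design choice is that risk level overrides confidence: a highly confident model still does not get the final say on a critical decision.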

5. Transparency and Explainability

AI decisions must be understandable.

6. Performance Accountability

AI systems must have measurable KPIs.
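A simple way to make KPIs enforceable is to record targets as data and check observed metrics against them. The metric names and target values below are hypothetical placeholders, and treating a missing metric as a breach is a design assumption of this sketch.

```python
import operator

COMPARATORS = {">=": operator.ge, "<=": operator.le}

# Hypothetical targets: (comparison, limit) per metric.
KPI_TARGETS = {
    "accuracy": (">=", 0.95),
    "false_positive_rate": ("<=", 0.02),
    "median_latency_ms": ("<=", 300),
}


def kpi_breaches(observed, targets=KPI_TARGETS):
    """Return the names of KPIs that are missing or outside their target."""
    breaches = []
    for name, (comparison, limit) in targets.items():
        value = observed.get(name)
        if value is None or not COMPARATORS[comparison](value, limit):
            breaches.append(name)
    return breaches


if __name__ == "__main__":
    observed = {"accuracy": 0.97, "false_positive_rate": 0.05}
    print("Breached KPIs:", kpi_breaches(observed))
```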

Building Trustworthy AI: A Risk-Based Governance Approach

AI’s power comes with risk. Poorly managed systems can:

- Amplify bias

- Violate security policies

- Produce harmful or incorrect decisions

To manage this, organizations need structured frameworks.

A strong governance approach ensures AI systems are:

- Valid and accurate

- Reliable and consistent

- Safe for users and society

- Secure and resilient against attacks

- Explainable and interpretable

- Privacy-preserving

- Fair and unbiased

- Accountable and transparent

Without these characteristics, AI cannot be trusted.

The AI Risk Management Lifecycle

An effective governance system follows a continuous lifecycle:

1. Govern

This defines culture, policies, and expectations.

- Establish principles

- Define acceptable use

- Ensure compliance

Governance influences everything else.

2. Map

This stage builds context:

- Identify stakeholders

- Define roles

- Understand system usage

- Set goals

- Define risk tolerance

Without context, risk cannot be understood.

3. Measure

Risk must be evaluated using:

- Quantitative methods (data-driven)

- Qualitative assessments (high/medium/low)

Also includes:

- Testing

- Validation

- System analysis

This ensures visibility across the entire lifecycle.

4. Manage

This is where action happens:

- Prioritize risks

- Mitigate where possible

- Accept or transfer when necessary

- Continuously monitor

This creates a cycle of continuous improvement.
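The Measure and Manage stages can be sketched with a simple likelihood-times-impact score that is then mapped to a high/medium/low rating and used to prioritize. The 1 to 5 scales and the band cut-offs (15 and 6) are assumptions for this example; organizations typically calibrate these to their own risk tolerance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self):
        """Quantitative score: likelihood multiplied by impact (1 to 25)."""
        return self.likelihood * self.impact


def rating(score):
    """Map a numeric score to a qualitative rating (assumed cut-offs)."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


def prioritize(risks):
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    register = [
        Risk("Data leakage via external tools", likelihood=3, impact=4),
        Risk("Biased ranking of candidates", likelihood=4, impact=5),
        Risk("Chatbot gives outdated store hours", likelihood=2, impact=1),
    ]
    for risk in prioritize(register):
        print(f"{risk.name}: score={risk.score}, rating={rating(risk.score)}")
```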

The AI Governance Maturity Model

Organizations evolve through stages:

- Ad Hoc Usage – No control

- Controlled Experiments – Limited governance

- Structured Framework – Defined policies

- Enterprise Integration – Organization-wide governance

- Strategic Advantage – Governance as competitive edge

The Governance Gaps Killing AI Strategies

Common gaps include:

- No clear AI ownership

- Weak board-level oversight

- Inconsistent data standards

- Lack of model accountability

- Poor escalation processes

- Ethical principles without enforcement

- AI treated as an IT matter instead of an enterprise risk

The Role of the Board and Executive Leadership

Leadership must shift from:

“Can we deploy this AI?”

To:

“Should we deploy this AI?”

Responsibilities

- Define AI risk appetite

- Assign ownership

- Ensure compliance

- Align innovation with governance

Linking AI Governance to Business Results

Strong governance delivers measurable outcomes:

- Reduced legal risk

- Improved trust

- Better performance

- Increased scalability

- Higher investor confidence

Governance enables innovation—it does not block it.

Shadow AI: The Hidden Threat

Shadow AI refers to employees using AI tools that the organization has not approved or does not know about.

Risks

- Data leaks

- Biased outputs

- Compliance violations

Solutions

- Provide secure alternatives

- Monitor usage

- Educate employees
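Monitoring usage can start with something as basic as comparing outbound requests against a list of approved AI services. The sketch below assumes a list of request URLs is available (for example, from proxy logs); the hostnames are made-up placeholders, and the two host sets would need to be maintained by the organization.

```python
from urllib.parse import urlparse

# Hypothetical hostnames for illustration only.
APPROVED_AI_HOSTS = {"ai.internal.example.com"}
KNOWN_AI_HOSTS = {
    "ai.internal.example.com",
    "chat.example-ai.com",
    "api.example-llm.net",
}


def unapproved_ai_requests(urls):
    """Return the URLs that point to a known AI service that is not approved."""
    flagged = []
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host in KNOWN_AI_HOSTS and host not in APPROVED_AI_HOSTS:
            flagged.append(url)
    return flagged


if __name__ == "__main__":
    requests_seen = [
        "https://ai.internal.example.com/v1/complete",
        "https://chat.example-ai.com/session/123",
        "https://www.example.org/news",
    ]
    print(unapproved_ai_requests(requests_seen))
```

Flagged usage is most useful as a signal of unmet demand: it shows where a secure, approved alternative is needed.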

Step-by-Step Roadmap to Build an AI Governance Framework

1. Identify AI use cases

2. Classify risk levels

3. Define enforceable policies

4. Assign ownership

5. Implement controls

6. Monitor usage

7. Audit and improve continuously
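The first steps of the roadmap (inventory, risk classification, and controls) can be prototyped in a few lines. In this sketch the classification criteria, the three tiers, and the controls attached to each tier are illustrative assumptions, not a regulatory scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    affects_individuals: bool  # e.g. loans, hiring, diagnoses
    fully_automated: bool      # no human reviews the output before it takes effect
    uses_personal_data: bool


# Assumed controls per tier; each tier here adds to the one below it.
REQUIRED_CONTROLS = {
    "low": ["usage logging"],
    "medium": ["usage logging", "periodic output review"],
    "high": ["usage logging", "periodic output review", "human approval", "bias testing"],
}


def classify(use_case):
    """Assign a risk tier using simple, assumed criteria."""
    if use_case.affects_individuals and use_case.fully_automated:
        return "high"
    if use_case.affects_individuals or use_case.uses_personal_data:
        return "medium"
    return "low"


def controls_for(use_case):
    """Look up the controls required for a use case's tier."""
    return REQUIRED_CONTROLS[classify(use_case)]


if __name__ == "__main__":
    inventory = [
        UseCase("Automated loan approval", True, True, True),
        UseCase("Resume screening with recruiter review", True, False, True),
        UseCase("Internal code search", False, False, False),
    ]
    for uc in inventory:
        print(f"{uc.name}: {classify(uc)} -> {controls_for(uc)}")
```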

AI Governance Checklist: What’s Your Score?

✔ AI usage is visible

✔ Risk classification exists

✔ Data access is controlled

✔ Policies are enforced

✔ External tools are tracked

✔ Monitoring is active

✔ Outputs are validated

✔ Audit logs exist

Scoring (one point per item you can check, out of 8):

- 0–3 → High risk

- 4–7 → Moderate governance

- 8 → Strong governance
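For teams that want to track this over time, the scoring can be automated. This is a minimal sketch based on the eight checklist items above, with one point per item; the function name and the dictionary input format are assumptions.

```python
CHECKLIST = (
    "AI usage is visible",
    "Risk classification exists",
    "Data access is controlled",
    "Policies are enforced",
    "External tools are tracked",
    "Monitoring is active",
    "Outputs are validated",
    "Audit logs exist",
)


def governance_score(answers):
    """Return (score, band) given a mapping of checklist item -> True/False.

    Items missing from the mapping count as not met.
    """
    score = sum(1 for item in CHECKLIST if answers.get(item, False))
    if score <= 3:
        band = "High risk"
    elif score <= 7:
        band = "Moderate governance"
    else:
        band = "Strong governance"
    return score, band


if __name__ == "__main__":
    answers = {item: True for item in CHECKLIST[:5]}  # first five items met
    print(governance_score(answers))
```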

What Happens Without AI Governance?

Organizations face:

- Regulatory penalties

- Financial loss

- Reputational damage

- Bias and discrimination

- Strategic failure

AI amplifies outcomes—both good and bad.

Building Governance as a Strategic Advantage

Organizations with strong governance achieve:

- Faster innovation

- Greater trust

- Better scalability

- Competitive advantage

Governance is not a limitation—it is a growth enabler.

Practical Steps for Enterprises

- Start with high-impact use cases

- Map workflows and decision points

- Define ethical and operational policies

- Integrate human oversight

- Measure performance and risk

- Continuously improve frameworks

FAQs

What does it mean that AI transformation is a problem of governance?

It means the main challenge is controlling how AI is used—not building it.

Why do AI initiatives fail without governance?

Because usage scales faster than control, leading to risk and inconsistency.

What changes when AI moves from experimentation to transformation?

AI becomes embedded in real workflows, increasing impact and risk.

How should leaders approach AI governance?

Focus on visibility, accountability, control, and continuous monitoring.

What is the biggest governance gap today?

The gap between policy and real-world enforcement.

Conclusion: Governance Is the Real Competitive Advantage

The question is no longer whether organizations will adopt AI—they already have.

The real question is:

Can they govern it effectively?

AI transformation is a governance problem because it:

- Redefines decision-making

- Redistributes power

- Amplifies risk and impact

Technology creates capability.

Governance creates control.

Organizations that treat governance as a strategic priority—not just compliance—will build:

- Trustworthy systems

- Scalable AI operations

- Long-term competitive advantage

In the AI-driven future, success will not belong to those with the smartest algorithms.

It will belong to those with the strongest governance.